Mounting Ceph in k8s using dynamically provisioned PVs

Create a pool in Ceph

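```
[root@ceph-01 ~]# ceph osd pool create kube-rbd 50
pool 'kube-rbd' created
```

Look up the key of the Ceph admin user (no base64 encoding is needed; `kubectl create secret` takes care of that later)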

```
[root@ceph-01 ~]# ceph auth get-key client.admin;echo
AQDkfBRignnLHxAAx09AslEoVhcrS82wjMe3lg==
```

Copy ceph.conf and ceph.client.admin.keyring to every k8s node, for example to node 10.2.4.238:

```
scp -r /etc/ceph/ceph.conf 10.2.4.238:/etc/ceph
scp -r /etc/ceph/ceph.client.admin.keyring 10.2.4.238:/etc/ceph/
```

Create the provisioner controller

The YAML file, k8s_ceph/rbd-provisioner.yaml on master01, is as follows:

```yaml
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: rbd-provisioner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["kube-dns","coredns"]
    verbs: ["list", "get"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: rbd-provisioner
subjects:
  - kind: ServiceAccount
    name: rbd-provisioner
    namespace: default
roleRef:
  kind: ClusterRole
  name: rbd-provisioner
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: rbd-provisioner
rules:
  - apiGroups: [""]
    resources: ["secrets"]
    verbs: ["get"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: rbd-provisioner
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: rbd-provisioner
subjects:
  - kind: ServiceAccount
    name: rbd-provisioner
    namespace: default
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: rbd-provisioner
spec:
  selector:
    matchLabels:
      app: rbd-provisioner
  replicas: 1
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: rbd-provisioner
    spec:
      containers:
        - name: rbd-provisioner
          image: quay.io/xianchao/external_storage/rbd-provisioner:v1
          imagePullPolicy: IfNotPresent
          env:
            # The provisioner name; the StorageClass "provisioner" field must use the same value
            - name: PROVISIONER_NAME
              value: ceph.com/rbd
      serviceAccount: rbd-provisioner
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: rbd-provisioner
```

Apply it:

```
kubectl apply -f rbd-provisioner.yaml
```

Create the secret in k8s

The value of `key` is the admin key printed by `ceph auth get-key client.admin`:

```
[root@master01 ~]# kubectl create secret generic ceph-admin-secret --type="ceph.com/rbd" --from-literal=key=AQCrBA5i+An3CxAA5XrU/yqC2iTBhoLXOadCCw== --namespace=default
secret/ceph-admin-secret created
```

Note: the type must match `value: ceph.com/rbd` in rbd-provisioner.yaml; otherwise an error is reported.
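`kubectl create secret generic --from-literal` base64-encodes the value itself, which is why the raw key from `ceph auth get-key` is passed unchanged. If you would rather keep the Secret in a manifest, the `data` field must hold the base64-encoded key. The following is a minimal sketch. `make_ceph_secret` is a hypothetical helper, not part of kubectl or Ceph, that prints a manifest with the same name, namespace and type as the command above. It assumes a `base64` command is available.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a Secret manifest equivalent to the
# `kubectl create secret generic ... --type="ceph.com/rbd"` command above.
# Usage: make_ceph_secret <ceph-key> [name] [namespace]
make_ceph_secret() {
  local key="$1"
  local name="${2:-ceph-admin-secret}"
  local namespace="${3:-default}"
  local encoded
  # Values under `data` must be base64-encoded; printf '%s' avoids
  # encoding a trailing newline, and tr removes any line wrapping.
  encoded="$(printf '%s' "$key" | base64 | tr -d '\n')"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: ${name}
  namespace: ${namespace}
type: ceph.com/rbd
data:
  key: ${encoded}
EOF
}
```

Example: `make_ceph_secret "$(ceph auth get-key client.admin)" | kubectl apply -f -`. The `type` is kept as `ceph.com/rbd` to follow the note above.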

Create the StorageClass (sc.yaml)

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ceph-storage-test
# Must match PROVISIONER_NAME in rbd-provisioner.yaml
provisioner: ceph.com/rbd
reclaimPolicy: Retain
parameters:
  monitors: 10.2.4.221:6789,10.2.4.222:6789,10.2.4.223:6789
  adminId: admin
  adminSecretName: ceph-admin-secret
  adminSecretNamespace: default
  pool: kube-rbd
  imageFormat: "2"
```

Create it:

```
[root@master01 ~]# kubectl apply -f sc.yaml
storageclass.storage.k8s.io/ceph-storage-test created
```

Create the PVC (pvc.yaml)

```yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: ceph-pvc
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: ceph-storage-test
  resources:
    requests:
      storage: 2Gi
```

Create it:

[root@master01 ~]# kubectl apply -f pvc.yaml

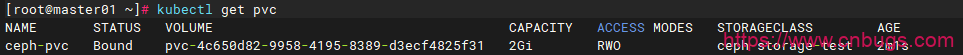

persistentvolumeclaim/ceph-pvc create查看pvc是否创建成功
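The same check can also be scripted. The following is a minimal sketch that assumes `kubectl` is configured against this cluster. It polls the PVC's `.status.phase` until it reports `Bound` or a timeout expires. The function name `wait_pvc_bound`, the 2-second poll interval and the 60-second default timeout are illustrative assumptions, not part of the original setup. Once the claim is Bound, `kubectl get pv` should list the volume created by the provisioner, and `rbd ls -p kube-rbd` on a Ceph node should list the RBD image backing it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: wait until a PVC reports phase "Bound".
# Usage: wait_pvc_bound <pvc-name> [namespace] [timeout-seconds]
wait_pvc_bound() {
  local pvc="$1"
  local namespace="${2:-default}"
  local timeout="${3:-60}"
  local waited=0 phase
  while [ "$waited" -lt "$timeout" ]; do
    # The phase stays Pending until the provisioner has created and bound a PV
    phase="$(kubectl get pvc "$pvc" -n "$namespace" -o jsonpath='{.status.phase}' 2>/dev/null)"
    if [ "$phase" = "Bound" ]; then
      echo "PVC ${namespace}/${pvc} is Bound"
      return 0
    fi
    sleep 2
    waited=$((waited + 2))
  done
  echo "PVC ${namespace}/${pvc} not Bound after ${timeout}s (last phase: ${phase:-unknown})" >&2
  return 1
}
```

Example: `wait_pvc_bound ceph-pvc default 120 && kubectl get pv`. If the claim stays Pending, `kubectl logs deploy/rbd-provisioner` is the first place to look for errors from the provisioner.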
